This is a project to replicate 19th-century African artefacts currently held by the Royal Greenwich Heritage Trust.

The workflow is to scan, 3D print and then authentically finish these historical items: an East African carved wooden headrest, a series of wooden hair combs, and bronze horseman sculptures. Together, these objects offer a range of material and surface qualities, from worn hardwood to aged metal, making them ideal for testing the full photogrammetry‑to‑copy workflow.

Rather than aiming to produce pristine, as-new replicas, this project focuses on how digital and fabrication processes can retain – and even amplify – traces of age, handling, and material character present in the original objects.

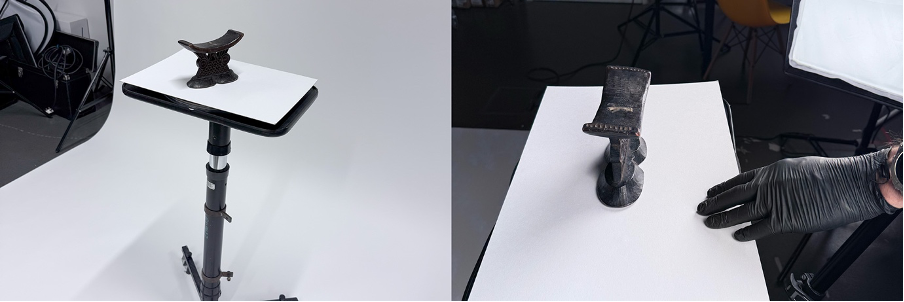

Photogrammetric capture

All artefacts are captured using DSLR‑based photogrammetry. This approach allows for flexible image capture that adapts well to differing shapes and surface finishes.

Each object is photographed from all angles using a consistent lighting setup, ensuring strong image overlap and even exposure. Particular care is taken with the bronze horseman, where reflective highlights can easily interfere with surface reconstruction. Diffuse lighting and careful camera positioning help minimise glare while still preserving subtle form and texture.

The carved wooden artefacts – the headrest and combs – are more straightforward, but the shallow relief and worn surfaces still require dense coverage to avoid soft or ambiguous geometry in the resulting models. In practice, capturing more images than strictly necessary consistently results in more reliable reconstructions.
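As a rough illustration of what dense coverage can mean in practice, the sketch below lists camera positions for an orbit-style capture: several rings of photographs at different heights, with a fixed angular step between neighbouring shots. The function name, the 15° step and the three elevation angles are all hypothetical values chosen for illustration; they are not the settings used in this project.

```python
import math


def plan_capture(step_deg, elevations_deg):
    """Return (azimuth, elevation) camera positions for an orbit-style capture.

    step_deg is the largest angle allowed between neighbouring shots in a ring.
    elevations_deg lists the camera elevation of each ring, in degrees.
    Both are illustrative assumptions, not settings taken from the project.
    """
    if not 0 < step_deg <= 360:
        raise ValueError("step_deg must be greater than 0 and at most 360")
    # Round the shot count up so the real step never exceeds the requested one.
    shots_per_ring = math.ceil(360 / step_deg)
    actual_step = 360 / shots_per_ring
    return [
        (round(i * actual_step, 2), elevation)
        for elevation in elevations_deg
        for i in range(shots_per_ring)
    ]


if __name__ == "__main__":
    plan = plan_capture(step_deg=15, elevations_deg=[10, 35, 60])
    print(f"{len(plan)} photographs across 3 rings")
```

Reducing the step, or adding a ring, is the simplest way to capture more images than strictly necessary for shallow relief and worn surfaces.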

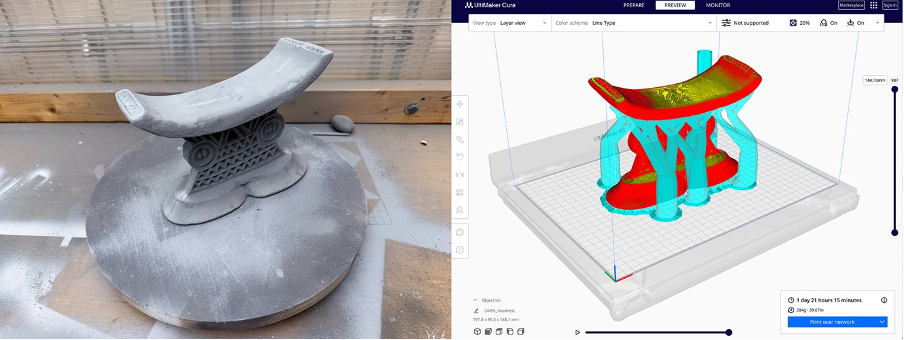

Digital modelling and cleanup

The image sets are processed in the photogrammetry software Reality Capture into dense 3D meshes, followed by digital cleanup in the 3D editing software Blender. This stage is kept light – occlusion holes and obvious artefacts are addressed, but surface irregularities are largely preserved. This reflects an approach that treats photogrammetry not as a means of achieving visual perfection, but as a way of retaining material history. Minor distortions introduced through capture can echo the wear already present in physical artefacts. Excessive smoothing risks replacing these qualities with an artificial digital uniformity.

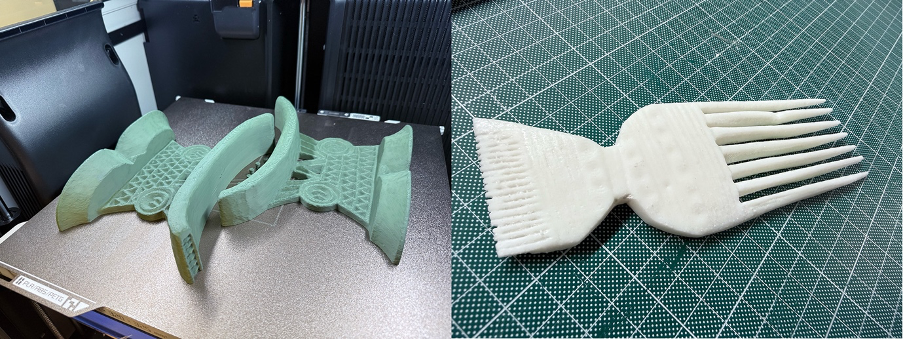

Fabrication: choosing different printing processes

Each artefact here is fabricated using a different 3D printing technology, selected based on form, scale, and desired surface qualities.

The bronze horseman is printed on a nylon SLS (powder) printer. Due to its size, the model is produced in multiple parts and later reassembled. The slightly grainy surface typical of SLS prints becomes an advantage rather than a problem here, closely resembling the texture of cast metal.

The wooden combs are produced using a resin printer. This process builds objects by curing layers of liquid resin from the bottom up, resulting in extremely smooth surfaces once the supports are removed. This makes it particularly well‑suited to the fine teeth and delicate detailing of the combs.

The headrest is printed on a more conventional FDM printer, which extrudes molten plastic in layers, building the object up gradually. It is printed in two halves and then fixed together. The visible layering produced by this method echoes carved tool marks, making it a surprisingly appropriate choice for a wooden object.
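Deciding how many parts a model needs comes down to comparing its bounding box with the printer's build volume. The sketch below shows that arithmetic under stated assumptions: the function name, the dimensions and the 5 mm safety margin are hypothetical, and it only counts straight cuts along each axis. In practice, cut lines are usually placed by eye so that seams fall where they are easiest to hide.

```python
import math


def pieces_per_axis(model_mm, build_mm, margin_mm=5.0):
    """Estimate how many pieces a model needs along each axis (x, y, z).

    model_mm is the model's bounding box and build_mm the printer's build
    volume, both in millimetres. margin_mm is kept clear on every side of
    the build volume. All values used here are illustrative assumptions.
    """
    usable = [size - 2 * margin_mm for size in build_mm]
    if any(size <= 0 for size in usable):
        raise ValueError("margin leaves no usable build volume")
    # Round up: a model even slightly too long needs one more piece.
    return tuple(math.ceil(m / u) for m, u in zip(model_mm, usable))


if __name__ == "__main__":
    # Hypothetical headrest-sized model on a hypothetical desktop FDM printer.
    counts = pieces_per_axis((300, 120, 150), (220, 220, 250))
    print(counts, "->", math.prod(counts), "pieces in total")
```

With these example numbers the model is too long for the bed in one direction only, so it splits into two halves.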

Surface preparation

Before finishing, all prints undergo a combination of sanding, cleaning, and under‑coating. Preparation varies depending on material, but the intention is never to eliminate surface traces entirely.

Light sanding removes sharp artefacts while leaving enough texture to contribute to realism. Some pieces receive a single coat of filler primer, which is then sanded back so that it remains primarily in deeper print lines. This softens the surface without erasing its character.

Painting and finishing: simulating aged wood

The headrest and combs are finished using layered paint techniques designed to suggest aged hardwood rather than freshly finished timber.

A dark, warm brown base coat provides an initial foundation. From here, multiple diluted washes are applied, allowing pigment to settle into recesses and carved details. Raised areas are selectively blotted back, reducing the “plastic” appearance of the prints. A nail is also used, judiciously, to physically carve deeper into some of the grooves.

Very restrained dry‑brushing is used to pick out edges and ridges. The emphasis here is subtlety – highlights that are obvious quickly undermine the illusion of age.

To finish, a neutral shoe polish is applied to areas that would naturally be handled. Buffed gently, this creates a soft, uneven sheen associated with skin oils and long‑term use, rather than a uniform varnish.

Painting and finishing: the bronze horseman

The bronze horseman follows a different finishing approach. Rather than painting metallic colour directly, the model is first coated in matte black. This acts as a shadow base, ensuring that any paint missed in recesses reads as depth rather than exposed plastic.

Metallic colour is then introduced using Antique Gold Rub ’n Buff, applied through a dry‑brushing process so that pigment catches only on raised surfaces. This technique works particularly well with the subtle grain of the SLS print, creating broken highlights that resemble cast metal rather than smooth paint.

Age and wear are introduced through thin brown and ochre washes, which are allowed to pool in crevices before being partially wiped back from high points. This layering process avoids a uniformly “dirty” appearance and instead produces variation consistent with long‑term exposure and handling.

Finally, Rub ’n Buff is lightly touched onto the tips and sharp edges of the horseman to add a glint at these points.

Conclusion

The resulting prints are not intended to replace the artefacts they are based on. Instead, they offer a way to examine how historic objects can be translated into contemporary materials while still retaining evidence of use, age, and making.